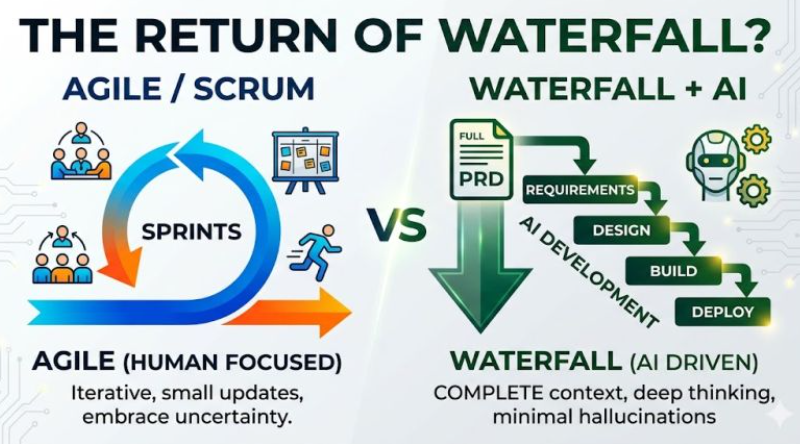

For the past few months, I’ve been seeing a new narrative:

“AI is bringing Waterfall back.”

Below is an example of one such post, from Sanath Kashyap, one of my LinkedIn connections.

At first glance, it sounds convincing. AI works better with full context. Complete requirements. Clear instructions.

So the conclusion seems obvious:

Write everything upfront → feed it to AI → get the result.

Simple.

Except it doesn’t work like that in the real world.

The Assumption That Breaks Everything

The whole argument depends on one assumption:

That complete requirements exist.

They don’t.

Not in complex systems.

Not in real companies.

Not in multi-team environments.

Try asking any stakeholder:

- “Is this the full scope?”

- “Are we sure nothing will change in 6 months?”

They won’t say yes.

Not because they’re bad at their jobs.

Because they don’t know.

Projects Are Not Built in Isolation

The “AI → full PRD → build everything” model only works if:

- your system is isolated

- your dependencies are stable

- your environment doesn’t change

That’s almost never the case.

Real projects depend on:

- other teams

- external systems

- regulations

- evolving business priorities

You don’t control all of that.

And AI doesn’t magically remove that complexity.

AI Doesn’t Eliminate Uncertainty

Yes, AI prefers full context.

But what happens when:

- part of the data is missing?

- requirements are unclear?

- decisions haven’t been made yet?

AI fills the gaps with plausible guesses.

When those guesses are wrong, that’s called hallucination.

So instead of reducing risk, you’re just:

→ shifting uncertainty into generated output

And trusting it.

This Is Where Agile Still Matters

Agile was never about sprints.

It was about dealing with uncertainty.

- build → learn → adjust

- reduce risk early

- adapt to new information

That problem hasn’t gone away.

If anything, AI makes it more dangerous to ignore.

Because now you can generate a lot of output very quickly…

based on assumptions that might be wrong.

Where the “AI = Waterfall” Idea Actually Works

There is a scenario where this thinking makes sense:

If:

- the problem is well-defined

- the system is simple

- the environment is stable

- requirements are truly complete

Then yes:

→ write everything upfront

→ let AI execute

But let’s be honest:

That’s not most software.

You Don’t Replace Agile. You Change How You Use It

What actually changes with AI:

- You can process larger context faster

- You can re-plan quicker

- You can prototype instantly

But you still:

- don’t know everything upfront

- operate in a changing environment

- depend on human decisions

So instead of:

Waterfall vs Agile

The real shift is:

AI-accelerated iteration:

- define what you know

- use AI to explore faster

- validate with reality

- adjust continuously

The Real Risk Right Now

The biggest mistake isn’t “still using Agile”.

The biggest mistake is:

Thinking AI removes the need to deal with uncertainty.

It doesn’t.

It just makes uncertainty easier to ignore.

Final Thought

AI rewards clarity. That part is true.

But clarity doesn’t mean:

→ pretending we know everything upfront

It means:

→ being explicit about what we don’t know

→ and designing how we learn it

That’s not Waterfall.

That’s just good thinking.